By Pratik Agarwal, Director of Onboard Autonomy

Achieving safe, scalable autonomy is existential for the AV industry. The architectural choices developers make (modular pipelines, pure end-to-end learning, or a structured middle path) shape everything from data efficiency and interpretability to safety validation and public trust. Compound AI is emerging as an architecture that balances those demands by blending data-driven scale with safety and interpretability. This post covers our approach to solving 99% of everyday autonomous driving. A related post discusses the research and emerging technologies needed to solve the long tail required for full general autonomy.

The autonomy architecture dilemma

Building systems that can drive at scale, explain their decisions, and meet stringent safety bars means balancing three tensions: data efficiency (how much data you need to reach a given performance level), interpretability (how well you can inspect and debug behavior), and safety performance (how well you can validate and certify the system). Historically, no single approach has delivered all three. That’s why the industry is converging on a new paradigm, Compound AI, that keeps the scalable benefits of end-to-end learning while reintroducing structure where it matters most.

The autonomy landscape: modular, end-to-end, and the gap

Three main paradigms define the recent evolution of autonomous driving stacks.

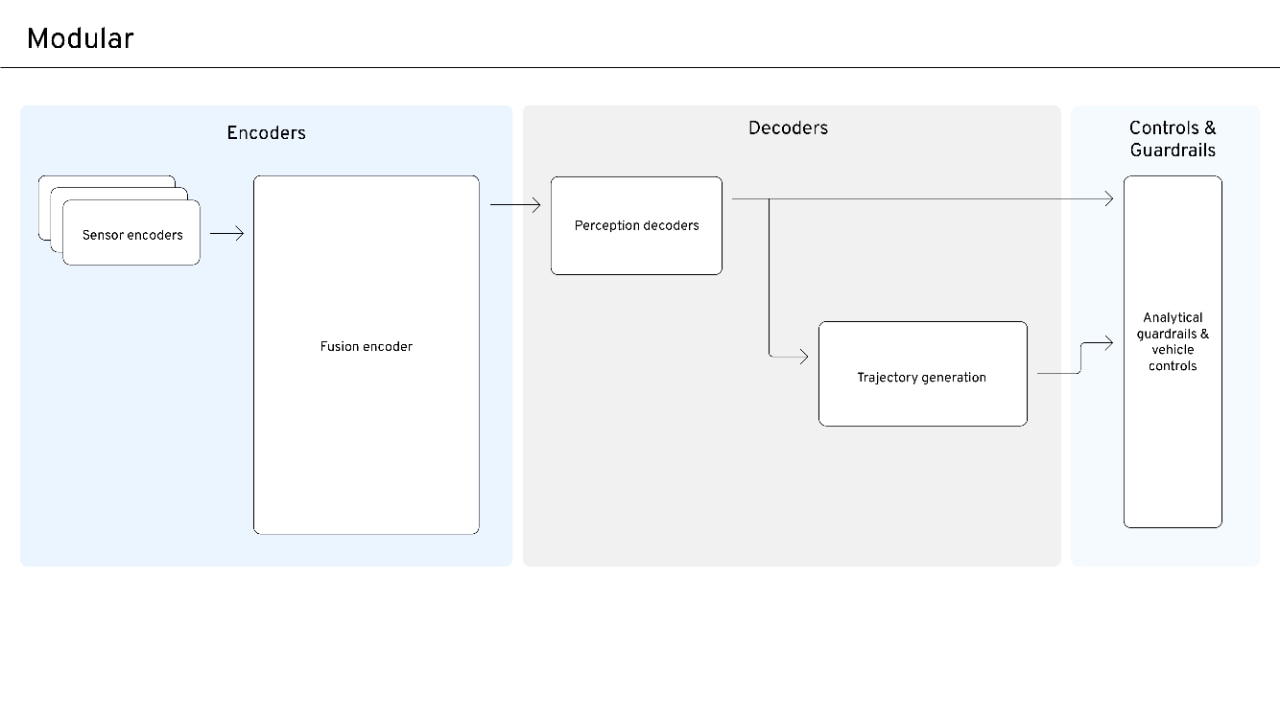

Modular systems

Classical autonomy stacks split the problem into explicit stages: perception and prediction (what’s in the scene and how it is expected to evolve), motion planning (where to go), and control (how to actuate). Each stage can be introspected and validated on its own.

Modular designs have low data requirements, which makes them feasible even when large datasets are unavailable. Introspection is straightforward because the intermediate representations are interpretable and easy to analyze. Validation is also relatively simple, since individual components can be tested at the module level. Safety assurance is strong because the system relies on certifiable modules that can be verified independently. However, generalizability is limited: these stacks scale poorly to new domains, broader scenarios, and long-tail cases.

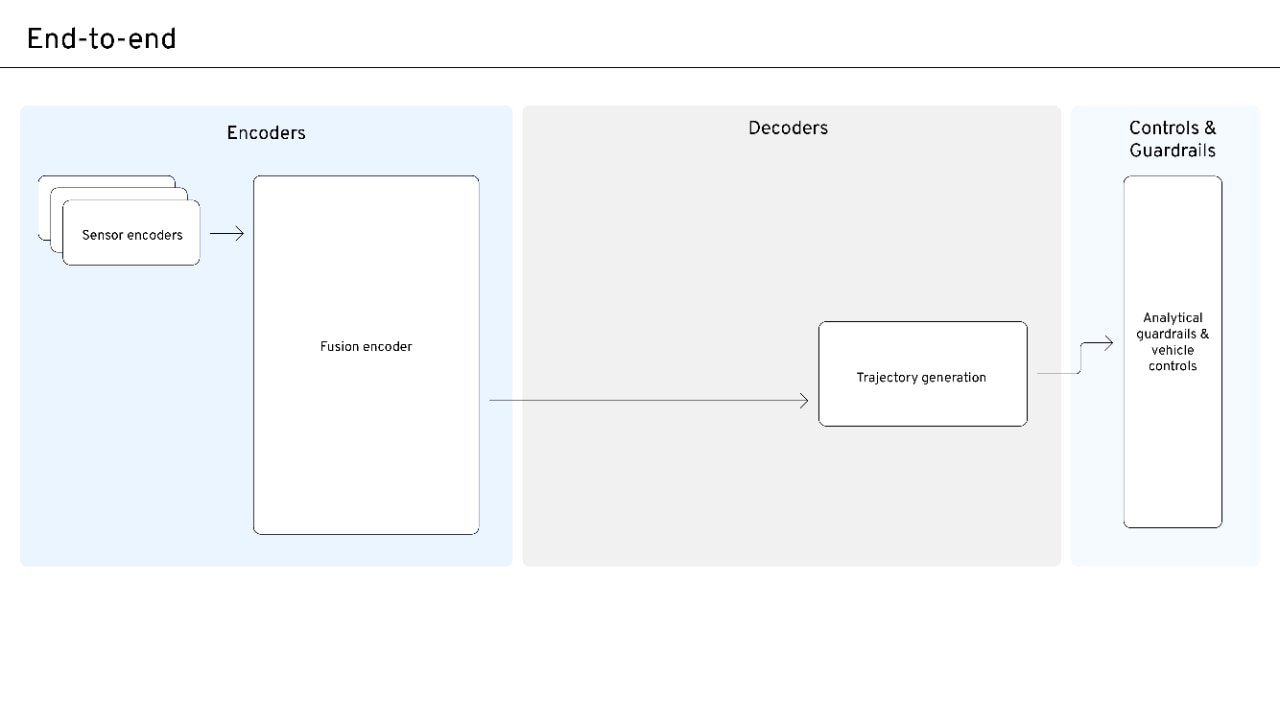

End-to-end systems

Pure end-to-end systems map sensor inputs directly to control (or a trajectory) with a single learned model. There is no perception-to-behavior information bottleneck, just a black box that scales with data.

The pure end-to-end approach offers high generalizability, as it can scale effectively with large volumes of unlabeled trajectory data and avoids the traditional perception-to-behavior bottleneck. However, it has high data requirements, relying heavily on large amounts of training data to perform effectively. Introspection is difficult because the internal representations are often opaque, making it challenging to interpret how decisions are formed. Validation is also difficult, as the system lacks clear component boundaries, which complicates systematic testing and debugging. Consequently, safety assurance is challenging under current validation frameworks, since traditional methods for verifying system reliability are harder to apply.

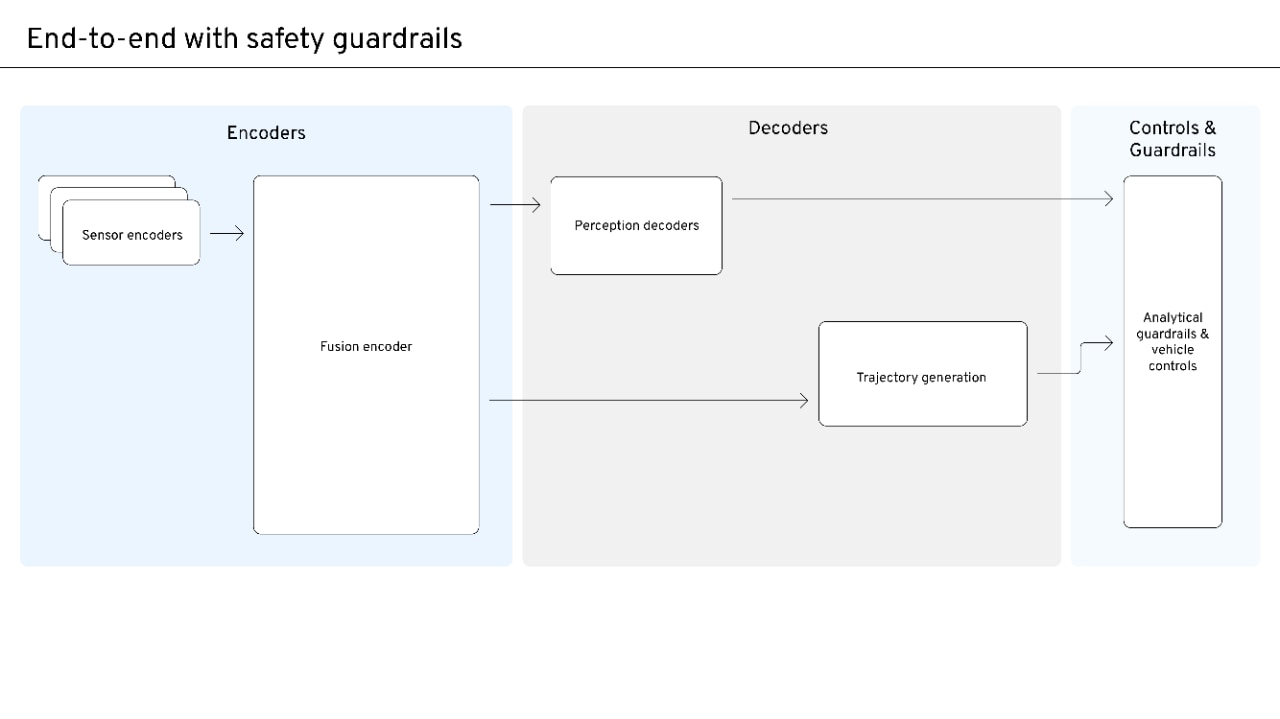

End-to-end with safety guardrails

A middle step wraps an end-to-end model in constraints (e.g., physics, rules of the road). This improves safety relative to pure end-to-end, but it does not reduce the high data requirements needed to meet performance bars.

Introspection, validation, and safety assurance also remain difficult. The model’s internal processes are still hard to interpret, which complicates efforts to understand how decisions are made. This lack of transparency makes validation more complex and poses challenges for ensuring safety within existing evaluation and verification frameworks.
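To make the guardrail idea concrete, here is a minimal Python sketch. It assumes a trajectory is a list of (x, y) waypoints in meters sampled at a fixed time step, and checks a few representative constraints: speed and acceleration limits (physics), drivable space, and a clearance margin to obstacles. `check_trajectory`, `Limits`, `in_lane`, and every threshold are hypothetical and chosen only for illustration.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Limits:
    # Hypothetical thresholds; real values depend on the vehicle and ODD.
    max_speed: float = 30.0     # m/s
    max_accel: float = 4.0      # m/s^2, magnitude of speed change per step
    min_clearance: float = 1.0  # m, safety margin to any obstacle point


def check_trajectory(points, dt, obstacles, is_drivable, limits=Limits()):
    """Return the names of violated guardrails; an empty list means the trajectory passes.

    points: (x, y) waypoints in meters, sampled every dt seconds.
    obstacles: (x, y) obstacle positions (treated as static here for simplicity).
    is_drivable: predicate telling whether an (x, y) point lies in drivable space.
    """
    violations = []
    # Finite-difference speed between consecutive waypoints.
    speeds = [math.dist(a, b) / dt for a, b in zip(points, points[1:])]
    if any(s > limits.max_speed for s in speeds):
        violations.append("speed")
    # Finite-difference change in speed, a simple proxy for longitudinal acceleration.
    if any(abs(v1 - v0) / dt > limits.max_accel for v0, v1 in zip(speeds, speeds[1:])):
        violations.append("accel")
    if not all(is_drivable(p) for p in points):
        violations.append("drivable_space")
    if any(math.dist(p, o) < limits.min_clearance for p in points for o in obstacles):
        violations.append("clearance")
    return violations


def in_lane(point):
    """Hypothetical drivable space: a straight 3.5 m lane centered on y = 0."""
    return abs(point[1]) <= 1.75


traj = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]  # 10 m/s at dt = 0.1 s
print(check_trajectory(traj, 0.1, obstacles=[(10.0, 0.0)], is_drivable=in_lane))  # → []
```

In this pattern the guardrail can reject a trajectory, but it cannot explain why the model proposed it. That is the introspection gap this section describes.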

To address these gaps, we need an architecture that keeps end-to-end-style scalability, while restoring sufficient structure for safety, interpretability, and a higher performance floor with less data. That architecture is Compound AI.

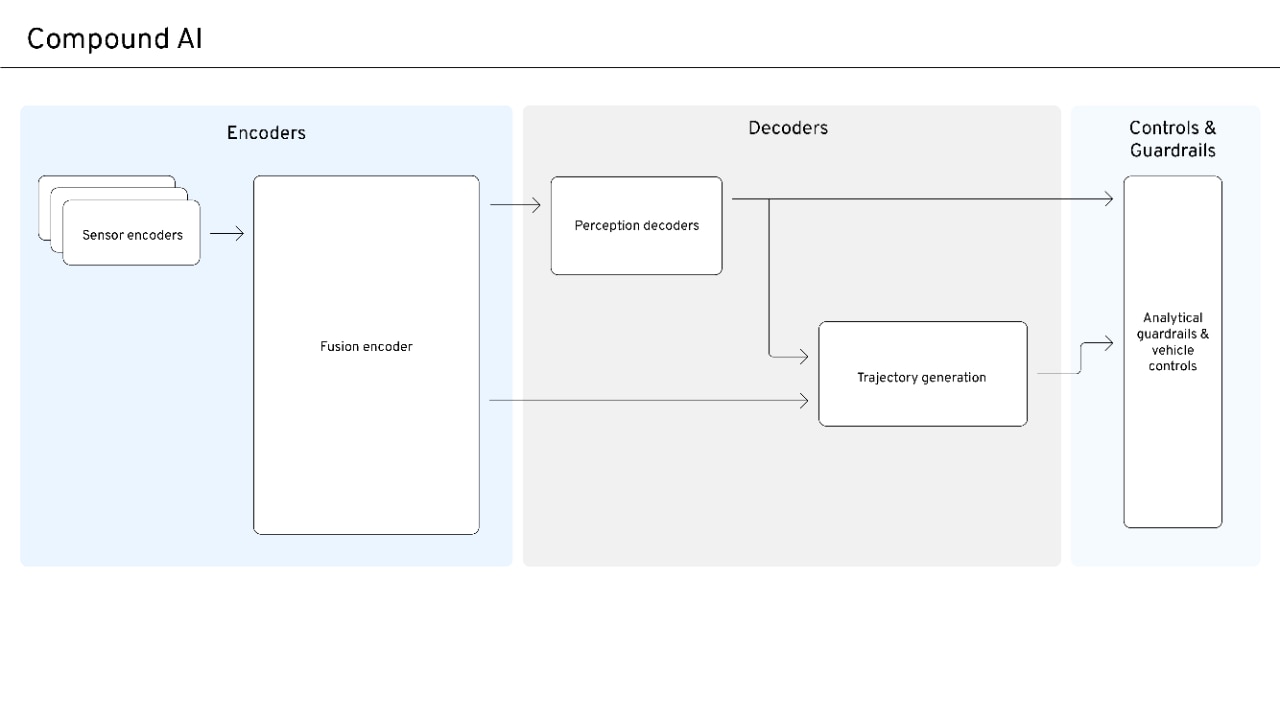

Compound AI

Compound AI keeps end-to-end learning that scales, but explicitly adds introspection, constraints, basic physics, and rules of the road into the loop. In practice, that means:

- Sensor and fusion encoders with shared representations that enable early fusion and end-to-end training. Training includes self-supervised objectives and can leverage pre-trained foundation models, VLMs (vision–language models), and world models. Perception decoders produce human-interpretable outputs, such as tracks and maps, that trajectory generation and guardrails can consume.

- Trajectory generation that learns behavior from the shared sensor and fusion encoder embeddings while also consuming the perception decoder outputs.

- Guardrails that act directly on trajectories (physics, drivable space, safety margins).
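The components above form a data flow. The following Python sketch wires them together under stated assumptions. Every component is an injected callable. The trajectory generator returns candidates best-first. The guardrail returns pass or fail. `CompoundPlanner` and its field names are hypothetical, and the toy lambdas stand in for learned models. The text does not say what happens when every candidate is rejected, so this sketch simply returns `None` in that case.

```python
from dataclasses import dataclass
from typing import Any, Callable, Optional, Sequence, Tuple


@dataclass
class CompoundPlanner:
    """Hypothetical wiring of the Compound AI components (not a production API)."""
    encoder: Callable[[Any], Any]                               # sensors -> shared embedding
    perception_decoder: Callable[[Any], dict]                   # embedding -> tracks, map, ...
    trajectory_generator: Callable[[Any, dict], Sequence[Any]]  # (embedding, perception) -> candidates, best first
    guardrail: Callable[[Any, dict], bool]                      # (trajectory, perception) -> passes?

    def plan(self, sensors) -> Tuple[Optional[Any], dict]:
        embedding = self.encoder(sensors)
        # Human-interpretable outputs: can be logged, inspected, and tested on their own.
        perception = self.perception_decoder(embedding)
        # The generator sees both the learned embedding and the decoded perception.
        for candidate in self.trajectory_generator(embedding, perception):
            # Guardrails act directly on trajectories.
            if self.guardrail(candidate, perception):
                return candidate, perception
        return None, perception  # no candidate passed; fallback behavior is out of scope here


# Toy usage: a straight lane of half-width 1.75 m and two candidate trajectories.
planner = CompoundPlanner(
    encoder=lambda frames: sum(frames) / len(frames),
    perception_decoder=lambda emb: {"tracks": [], "lane_half_width": 1.75},
    trajectory_generator=lambda emb, p: [[(0.0, 2.5)], [(0.0, 0.0)]],
    guardrail=lambda traj, p: all(abs(y) <= p["lane_half_width"] for _, y in traj),
)
trajectory, perception = planner.plan([0.2, 0.4])
print(trajectory)  # → [(0.0, 0.0)]
```

The first candidate leaves the lane and is rejected, so the second is returned together with the perception output that justified the decision.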

So it’s the same continuous-learning story as end-to-end, but with structure where it matters for safety and interpretability. In short, it lowers the data needed to reach a given performance bar while maintaining safety and scalability.

How structure and learning work together

Compound AI is an integrated design where structure and learning reinforce each other at runtime and in training.

The trajectory generator receives both perception decoder outputs (e.g., decoded tracks and map) and the shared embeddings. Reinforcement learning (RL) or other cost/reward terms use these perception outputs for train-time alignment, for example by rewarding progress and safety, or by penalizing collisions and boundary violations. This raises the performance floor and keeps behavior aligned with explicit rules while still learning from data.
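Here is a minimal Python sketch of such a perception-based reward. It makes three assumptions: a lane-aligned frame where progress is measured along x, a straight lane whose half-width comes from the decoded map, and decoded tracks that stay static over the horizon. The function name, weights, and `collision_radius` are hypothetical. A production reward would be far richer.

```python
import math


def alignment_reward(traj, tracks, lane_half_width,
                     w_progress=1.0, w_collision=10.0, w_boundary=5.0,
                     collision_radius=2.0):
    """Hypothetical train-time reward built from decoded perception outputs.

    traj: ego (x, y) waypoints in a lane-aligned frame (x = along the lane).
    tracks: decoded obstacle (x, y) positions, assumed static over the horizon.
    lane_half_width: from the decoded map; |y| beyond it is a boundary violation.
    """
    progress = traj[-1][0] - traj[0][0]  # reward distance advanced along the lane
    collisions = sum(1 for p in traj for o in tracks
                     if math.dist(p, o) < collision_radius)  # penalize near-contact waypoints
    boundary = sum(1 for _, y in traj if abs(y) > lane_half_width)  # penalize leaving the lane
    return w_progress * progress - w_collision * collisions - w_boundary * boundary


straight = [(0.0, 0.0), (5.0, 0.0), (10.0, 0.0)]
print(alignment_reward(straight, tracks=[], lane_half_width=1.75))  # → 10.0
```

Every term is computed from interpretable outputs. A poor reward can therefore be traced to a specific term, such as a collision penalty triggered by a particular decoded track.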

Training can be extended further by incorporating world models and vision-language-action (VLA) style models: world models for prediction and rollout; VLA for grounding high-level goals and language in perception and action. Modern reasoning models and multimodal foundation models can be leveraged in training (e.g., for better scene understanding, planning, or distillation) to push the performance ceiling higher while keeping the same Compound AI structure at deployment.

Why Compound AI is different from modular and end-to-end

- Compound AI versus modular: Modular stacks are safe and certifiable but don’t scale as well to new domains or long-tail cases. Compound AI keeps structure (perception decoder, guardrails, analytical guidance), so you retain safety and interpretability, while the shared encoder, backbone, and trajectory generation scale with data. That gives you both a certifiable story and a path to higher performance and broader operational design domains.

- Compound AI versus end-to-end: Pure end-to-end scales with unlabeled trajectory data and avoids perception-to-behavior bottlenecks, but it demands large data volumes to hit performance bars, offers poor explainability and debuggability, and makes validation and safety assurance challenging. Compound AI keeps end-to-end scaling (backprop from trajectories into a shared backbone, rich information flow) while adding a perception decoder, analytical guidance, and guardrails. You get faster iteration (e.g., component pre-training, clearer attribution), better interpretability, and a path to stronger safety assurance without giving up the benefits of end-to-end learning.
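A deliberately tiny scalar model in Python illustrates backpropagation from trajectories into a shared backbone, with the chain rule written out by hand. One shared backbone weight `w` feeds two heads, a trajectory head `a` and a perception head `b`. The two heads are trained jointly with a weighted sum of squared errors. This joint training is an assumption. All names, values, and the weighting `lam` are hypothetical. A real stack would use a deep network and an autodiff framework.

```python
def joint_loss_and_grads(x, w, a, b, target_traj, target_perc, lam=0.5):
    """Toy joint objective: trajectory loss plus lam * perception loss on a shared feature.

    Returns the loss and the two contributions to dLoss/dw, one per head.
    """
    h = w * x                          # shared backbone feature
    traj_err = a * h - target_traj     # trajectory head residual
    perc_err = b * h - target_perc     # perception decoder residual
    loss = traj_err ** 2 + lam * perc_err ** 2
    # Chain rule: both heads push gradient into the same backbone weight w.
    grad_w_from_traj = 2 * traj_err * a * x
    grad_w_from_perc = lam * 2 * perc_err * b * x
    return loss, grad_w_from_traj, grad_w_from_perc


print(joint_loss_and_grads(1.0, 1.0, 2.0, 1.0, 1.0, 0.0))  # → (1.5, 4.0, 1.0)

# Gradient descent on w only (heads held fixed for clarity).
w = 1.0
for _ in range(50):
    _, g_traj, g_perc = joint_loss_and_grads(1.0, w, 2.0, 1.0, 1.0, 0.0)
    w -= 0.05 * (g_traj + g_perc)
print(round(w, 4))  # → 0.4444
```

Training converges to w = 4/9. The trajectory loss alone would prefer w = 0.5, and the perception loss alone would prefer w = 0, so the result lies between them. This is the sense in which the interpretable perception head shapes the shared representation that trajectory generation also relies on.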

Advantages of Compound AI

- Safety and interpretability: Analytical guardrails and human-readable perception outputs make behavior explainable to engineers and regulators. That supports both development and validation.

- Faster iteration: Component pre-training and clear interfaces let teams iterate on each component with a shorter feedback loop.

- Strong generalizability: The end-to-end backbone is preserved, so the system still scales with unlabeled trajectory data and avoids artificial perception-to-behavior bottlenecks. You get the floor-raising benefits of the structure without capping long-term scaling.

- Validation and debuggability: Structured outputs enable component-level testing and clearer attribution when something goes wrong. That can simplify the validation strategy and make the safety benefits more compelling to stakeholders.
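Structured outputs allow clearer attribution. Here is a hypothetical Python triage rule for a logged near-miss. It assumes ground-truth obstacle positions are available from labeling and that the decoded tracks and the executed trajectory were logged. If an obstacle near the path was never decoded, the rule blames perception. If the obstacle was decoded and the plan still came too close, the rule blames planning. `attribute_failure` and its radii are illustrative assumptions, not a described process.

```python
import math


def attribute_failure(gt_obstacles, decoded_tracks, trajectory,
                      clearance=1.0, match_radius=1.0):
    """Hypothetical triage of a logged event using the stack's interpretable outputs.

    gt_obstacles: labeled (x, y) obstacle positions.
    decoded_tracks: (x, y) positions the perception decoder produced.
    trajectory: (x, y) waypoints the stack executed.
    """
    # Obstacles the executed trajectory passed closer to than the clearance margin.
    too_close = [o for o in gt_obstacles
                 if any(math.dist(p, o) < clearance for p in trajectory)]
    if not too_close:
        return "no_failure"
    # Was each offending obstacle matched by some decoded track?
    missed = [o for o in too_close
              if not any(math.dist(o, t) < match_radius for t in decoded_tracks)]
    return "perception" if missed else "planning"


path = [(0.0, 0.0), (5.0, 0.5)]
print(attribute_failure([(5.0, 0.0)], decoded_tracks=[], trajectory=path))  # → perception
```

A pure end-to-end model exposes no decoded tracks, so this kind of first-pass split between perception and planning would not be available.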

One generalized system: Distilling strategy into product lines

A natural question after adopting a Compound AI architecture is how it maps to a product portfolio, from entry-level Advanced Driver-Assistance Systems (ADAS) through eyes-off and mind-off autonomy products. The answer is to treat the stack as one generalized system and distill all product lines from it, rather than building separate systems per tier or use case.

The strategy is to distill all tiers of ADAS and Autonomous Driving (AD) products from the generalized system:

- One stack, many products: The Compound AI stack is built as a superset of all capabilities and is continuously improved over time. Every shipped product is a distillation of this system, configured by capability subset, performance bar, operational design domain (ODD), and cost, rather than a standalone or parallel stack.

- Composition over duplication: Products can be composed from multiple capabilities at varying levels of performance and availability. Constraints (cost, consumer demand, validation process, sensor suite, etc.) determine how the generalized system is configured for each product line, not whether to build a new system.

- Learn once, apply everywhere: Data and learnings from one product or deployment flow back into the single generalized system. Improvements to that system then feed into every product distilled from it, driving consistency and continuous improvement across the entire ecosystem.
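The configuration side of this strategy can be sketched in Python. Each product is a declarative selection from one generalized capability superset rather than a separate stack. The capability names, fields, and example products are hypothetical. The sketch covers only configuration, not the model distillation or validation that each product tier would also require.

```python
from dataclasses import dataclass

# Hypothetical superset of capabilities exposed by the single generalized stack.
GENERALIZED_CAPABILITIES = frozenset({
    "lane_keeping", "adaptive_cruise", "lane_change",
    "urban_navigation", "unprotected_turns", "driverless_operation",
})


@dataclass(frozen=True)
class ProductConfig:
    """One product line, expressed as a configuration of the generalized system."""
    name: str
    capabilities: frozenset   # must be a subset of GENERALIZED_CAPABILITIES
    odd: str                  # operational design domain, e.g. "highway"
    performance_bar: float    # product-specific target metric (hypothetical units)
    cost_tier: str            # e.g. "entry", "premium"

    def __post_init__(self):
        # Composition over duplication: a product may only select existing capabilities.
        unknown = self.capabilities - GENERALIZED_CAPABILITIES
        if unknown:
            raise ValueError(f"{self.name}: not in generalized stack: {sorted(unknown)}")


entry_adas = ProductConfig("entry_adas", frozenset({"lane_keeping", "adaptive_cruise"}),
                           odd="highway", performance_bar=1.0, cost_tier="entry")
pav = ProductConfig("pav", GENERALIZED_CAPABILITIES, odd="urban_and_highway",
                    performance_bar=100.0, cost_tier="premium")
print(entry_adas.capabilities <= pav.capabilities)  # → True
```

A request for a capability the generalized stack lacks fails at configuration time instead of prompting a parallel stack. Improvements to the shared stack reach every product that selects the affected capability.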

In practice, the same Compound AI architecture (sensor encoders, shared backbone, perception decoder, trajectory generation, and guardrails) can power everything from basic ADAS to driverless personal autonomous vehicles (PAVs). What changes per product is the level of capability exposed, the ODD, and the validation and safety bar, not the core architecture.

Compound AI is the state-of-the-art architecture for autonomy: it keeps the scalability of end-to-end learning while promoting safety, interpretability, and a higher performance floor. It enables faster iteration through structure and component-level work, along with continued scaling with data. By combining data-driven learning with explicit structure (introspection, constraints, physics, and rules of the road), Compound AI supports deployment at scale with explainable, validated behavior intended to achieve stronger safety.